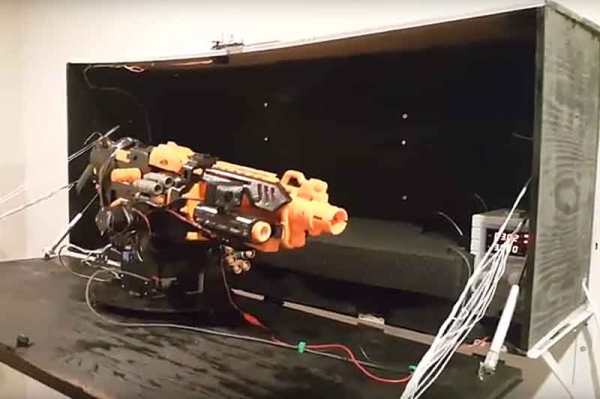

Meet [Tanner]. [Tanner] is a hacker who also cares about the security of their home while they’re out of town. After doing some research on home security, they found that it doesn’t take much to keep a house from being broken into. Truly determined burglars might be more difficult to deter. But for the opportunistic types who don’t like having their appendages treated like a chew toy or their face on the local news, the stakes are lower. All it might take is a security camera or two, or a big barking dog, to send them on their way. Rather than running to the local animal shelter, [Tanner] used parts that were already sitting around to create a solution to the problem: a computer-vision-triggered virtual dog.

Continue reading “CV Based Barking Dog Keeps Home Secure, Doesn’t Need Walking”