Growing up, ours was a family of handwritten notes for every occasion. The majority were left on the kitchen counter next to the sink, or in a particular spot on the all-purpose table in the breakfast nook. Whether one was professing their familial love and devotion on the back of a Valpak coupon, or simply communicating an intent to be home before dinnertime, the words were generally immortalized in BiC on whatever paper was available, and timestamped for the reader’s information. You may have learned cursive in school, but I was born in it — molded by it. The ascenders and descenders betray you because they belong to me.

Both of my parents always seemed incapable of printing in anything other than all caps, so I actually preferred to see their cursive most of the time. As a result, I could ~~copy~~ read it quite easily from an early age. Well, I don’t think I ever had any hope of imitating Dad’s signature. But Mom’s, on the other hand? Like I said in the first installment, it was important for my signature to be distinct from hers, given that we have the same name: first, middle, and last. Still, I could probably bust out her signature if it came down to something going on my permanent record.

While my handwriting was sort of naturally headed towards Mom’s, I was more interested in Dad’s style and that of my older brother. He had small caps handwriting down to an art, and my attempts to copy it have always looked angry and stilted by comparison. In addition, my brother’s cursive is lovely and quick, while still being legible.

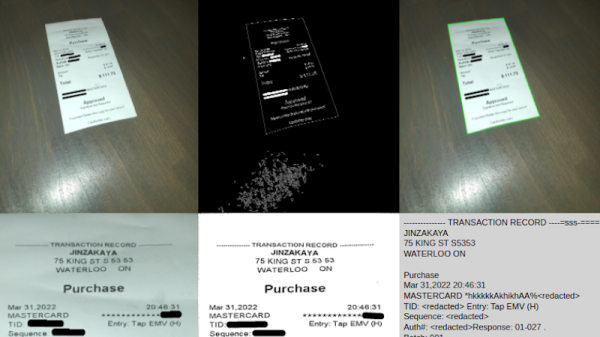

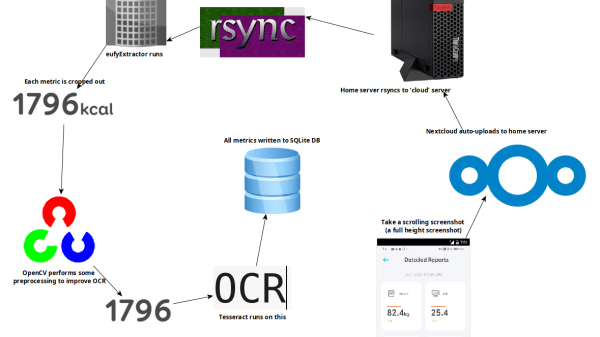

First of all, while OCR can be reliable, it needs the right conditions. One thing that ended up being a big problem was the way the app appends units (kg, %) after the numbers. Not only are they tucked in very close, but they’re about half the height of the numbers themselves. It turns out that mixing and matching character height, in addition to snugging them up against one another, is something tailor-made to give OCR reliability problems.
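
One way to soften the unit problem on the software side is to treat the unit as untrusted text and only rely on the number. The sketch below is not what the app or any particular OCR engine does; it assumes the engine hands back one raw string per reading, and `parse_reading` and `KNOWN_UNITS` are hypothetical names made up for illustration.

```python
import re

# Hypothetical unit list, based on the two units mentioned above (kg, %).
KNOWN_UNITS = ("kg", "%")

# A leading decimal number, then whatever the OCR engine made of the suffix.
_READING = re.compile(r"^\s*(\d+(?:[.,]\d+)?)\s*(.*?)\s*$")


def parse_reading(text):
    """Split an OCR'd string such as '72.5kg' into (value, unit).

    Returns None when no leading number is found. The unit is None when
    the suffix is not one of KNOWN_UNITS, which flags the reading for a
    manual check instead of guessing what the small characters were.
    """
    match = _READING.match(text)
    if match is None:
        return None
    number, suffix = match.groups()
    value = float(number.replace(",", "."))  # accept a decimal comma too
    suffix = suffix.lower()
    unit = suffix if suffix in KNOWN_UNITS else None
    return value, unit
```

Something like `parse_reading("72.5k9")` would still recover the number while returning no unit, which is a cheap signal that the half-height characters were misread.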
