Detecting a signal pulse is usually basic electronics, but complications appear when you need to time the signal’s arrival in the picosecond domain. One of these is the time-walk effect: if your circuit compares the input with a fixed threshold, a stronger signal will cross the threshold sooner than a weaker signal arriving at the same time, so stronger signals seem to arrive earlier. A constant-fraction discriminator solves this by triggering at a constant fraction of the pulse height, and [Michael Wiebusch] recently presented a hacker-friendly implementation of the design (open-access paper).

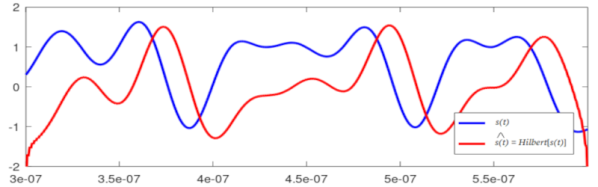

A constant-fraction discriminator splits the input signal into two components, inverts and attenuates one component, and delays the other by a predetermined amount. Because both components scale with the input amplitude, their sum always crosses zero at a fixed fraction of the original pulse, no matter how strong the signal is. Instead of checking for a voltage threshold, the processing circuitry detects this zero-crossing. Unfortunately, these circuits tend to require very fast (read “expensive”) operational amplifiers.
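To see why the zero-crossing trick works, here is a minimal numerical sketch in Python. It is not [Michael]’s circuit or anything from the paper: the pulse shape, the 2 ns time constant, the 0.1 threshold, the 0.3 fraction, and the 1 ns delay are all made-up values chosen for illustration, and the function names are hypothetical.

```python
import math

def pulse(t, amplitude, tau=2.0):
    """Toy detector pulse (times in ns): zero before t=0, peaks at t=tau.

    The shape and tau are assumptions for illustration only.
    """
    if t <= 0.0:
        return 0.0
    x = t / tau
    return amplitude * x * math.exp(1.0 - x)

def first_upward_crossing(times, values, level):
    """Linearly interpolated time at which `values` first rises through `level`."""
    for i in range(1, len(values)):
        lo, hi = values[i - 1], values[i]
        if lo < level <= hi:
            frac = (level - lo) / (hi - lo)
            return times[i - 1] + frac * (times[i] - times[i - 1])
    return None  # never crossed

def sample_times(dt=0.001, t_max=10.0):
    """Time grid in ns with a 1 ps step by default."""
    return [i * dt for i in range(int(t_max / dt))]

def leading_edge_time(amplitude, threshold=0.1):
    """Plain threshold comparator: suffers from time walk."""
    times = sample_times()
    values = [pulse(t, amplitude) for t in times]
    return first_upward_crossing(times, values, threshold)

def cfd_time(amplitude, fraction=0.3, delay=1.0):
    """Constant-fraction timing: delayed pulse plus inverted, attenuated copy."""
    times = sample_times()
    shaped = [pulse(t - delay, amplitude) - fraction * pulse(t, amplitude)
              for t in times]
    # The sum starts negative and crosses zero once the delayed pulse catches up.
    return first_upward_crossing(times, shaped, 0.0)

if __name__ == "__main__":
    for amplitude in (0.5, 1.0, 2.0):
        print(f"A={amplitude}: threshold {leading_edge_time(amplitude):.4f} ns, "
              f"CFD {cfd_time(amplitude):.4f} ns")
```

In this toy model the threshold comparator’s trigger time moves by roughly a hundred picoseconds between the weakest and strongest pulse, while the zero-crossing time stays put, since scaling the input scales both components of the sum equally. A real circuit also has to contend with noise and comparator behavior, which this sketch ignores.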

This is where [Michael]’s design shines: it uses only a few cheap integrated circuits and transistors, some resistors and capacitors, a length of coaxial line as a delay, and absolutely no op-amps. This circuit has remarkable precision, with a timing standard deviation of 60 picoseconds. The only downside is that the circuit has to be designed to work with a particular signal pulse length, but the basic design should be widely adaptable for different pulses.

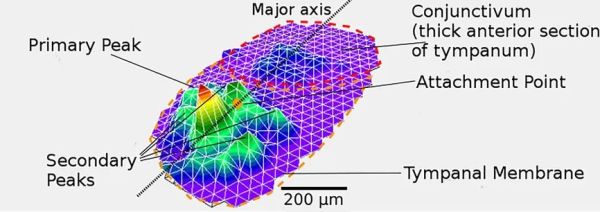

[Michael] designed this circuit for a gamma-ray spectrometer, of which we’ve seen a few examples before. In a spectrometer, the discriminator would process signals from photomultiplier tubes or scintillators, detectors we’ve also covered.