There are few things more frustrating, when you’re trying to get some serious hacking done, than intruders repeatedly showing up without permission. [All Parts Combined] has the solution for you, with a Kinect-based robotic sentry turret to keep them at bay.

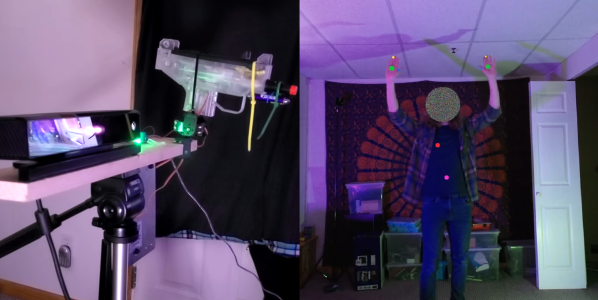

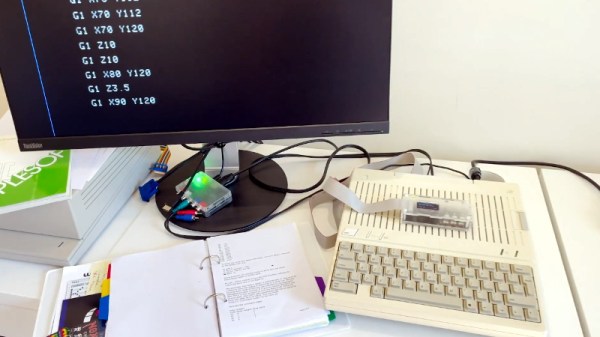

The system consists of a Microsoft Kinect V2 connected to a PC running an app that does all the processing and sends the targeting information to an Arduino over serial. The Arduino controls a simple two-axis servo mount with an electric airsoft gun zip-tied to it. The gun’s trigger switch is replaced with a relay, also connected to the Arduino.
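The write-up doesn’t describe the serial message format, so the snippet below is only a hypothetical sketch of how the PC side might pack a pan angle, a tilt angle, and a fire flag into one short ASCII line per update. The `P…,T…,F…` layout and the function names `encode_command` and `decode_command` are our own assumptions; the decoder just shows what the receiving end would need to do.

```python
def encode_command(pan_deg: float, tilt_deg: float, fire: bool) -> bytes:
    """Pack one targeting update as an ASCII line, e.g. b'P90,T75,F0\\n'.

    Hypothetical format: integer servo angles clamped to 0..180 degrees,
    plus 1/0 for whether the trigger relay should close.
    """
    pan = max(0, min(180, round(pan_deg)))
    tilt = max(0, min(180, round(tilt_deg)))
    return f"P{pan},T{tilt},F{int(fire)}\n".encode("ascii")


def decode_command(line: bytes) -> tuple[int, int, bool]:
    """Parse a line produced by encode_command, as the receiver might."""
    fields = line.decode("ascii").strip().split(",")
    # Each field is a one-letter tag followed by an integer value
    values = {field[0]: int(field[1:]) for field in fields}
    return values["P"], values["T"], values["F"] == 1
```

With the pyserial package, sending an update could look like `serial.Serial("COM3", 115200).write(encode_command(pan, tilt, False))`, where the port name and baud rate are placeholders. A newline-terminated text format is easy to debug in a serial monitor, at the cost of a few more bytes than a binary packet.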

The Kinect V2 comes with SDKs that greatly simplify tracking human movement and output the data in an easy-to-use format. [All Parts Combined] used the SDK in Unity, which lets him choose which body parts to track. He added scripts that detect a few basic gestures, issue voice commands, and generate the serial commands for the Arduino. The servo angles are calculated with simple geometry, using the target’s XY coordinates received from the SDK and the known distance between the Kinect and the turret.

When an intruder enters the Kinect’s field of view, the turret immediately starts aiming at the intruder’s heart, issues a “Hands Up!” command, and tells the intruder to leave. If the intruder doesn’t comply, it starts an audible countdown before firing. [All Parts Combined] also added a secret disarming gesture (double hand pistols), which turns the turret into an apologetic comrade. All it needs is a Portal-inspired enclosure.
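To illustrate the “simple geometry” behind the aiming, here is a rough sketch, not the project’s actual code. It assumes the SDK supplies a 3D joint position in metres (x sideways, y up, z away from the sensor), that the turret sits at a fixed, known offset from the Kinect with its axes aligned to the sensor’s, and that 90° on each servo points straight ahead. The `Vec3` type, `servo_angles` function, and `center_deg` parameter are hypothetical names.

```python
import math
from dataclasses import dataclass


@dataclass
class Vec3:
    x: float  # metres, sideways (assumed axis convention)
    y: float  # metres, up
    z: float  # metres, away from the sensor


def servo_angles(target: Vec3, turret_offset: Vec3,
                 center_deg: float = 90.0) -> tuple[float, float]:
    """Return (pan, tilt) servo angles in degrees, clamped to 0..180.

    target is the tracked joint in Kinect coordinates; turret_offset is
    the turret's position in the same coordinates (assumed known).
    """
    # Re-express the target relative to the turret instead of the Kinect
    dx = target.x - turret_offset.x
    dy = target.y - turret_offset.y
    dz = target.z - turret_offset.z
    # Pan: angle in the horizontal x-z plane
    pan = math.degrees(math.atan2(dx, dz))
    # Tilt: elevation above that plane
    tilt = math.degrees(math.atan2(dy, math.hypot(dx, dz)))

    def clamp(angle: float) -> float:
        # Hobby servos typically accept 0..180 degrees
        return max(0.0, min(180.0, angle))

    return clamp(center_deg + pan), clamp(center_deg + tilt)
```

Using `atan2` rather than dividing and calling `atan` keeps the sign right on both sides of the turret and avoids a division by zero when `dz` is zero. Whether a positive pan angle swings the gun left or right depends on how the servos are mounted, so a real build would likely need to flip a sign or two.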

It’s a fun project that illustrates how the Kinect can make complex computer vision tasks relatively simple. Unfortunately, the V2 is no longer in production, having been replaced by the more expensive, developer-focused Azure Kinect. We’ve covered several Kinect-based projects, including a 3D room scanner and a robotic basketball hoop.
