The current wave of excitement around machine learning kicked off when graphics processors were repurposed to make training deep neural networks practical. Nvidia found themselves the engine of a new revolution and seized their opportunity to help push frontiers of research. Their research lab in Seattle will focus on one such field: making robots smart enough to work alongside humans in an IKEA kitchen.

Today’s robots are mostly industrial machines that require workspaces designed for robots. They run day and night, performing repetitive tasks, usually inside cages to keep squishy humans out of harm’s way. Robots will need to be a lot smarter about their surroundings before we could safely dismantle those cages. While there are some industrial robots making a start in this arena, they have a hard time justifying their price premium. (Example: financial difficulty of Rethink Robotics, who made the Baxter and Sawyer robots.)

So there’s a lot of room for improvement in this field, and this evolution will need a training environment offering tasks of varying difficulty levels for robots. Anywhere from the rigorous structured environment where robots work well today, to a dynamic unstructured environment where robots are hopelessly lost. Lab lead Dr. Dieter Fox explained how a kitchen is ideal. A meticulously cleaned and organized kitchen is very similar to an industrial setting. From there, we can gradually make a kitchen more challenging for a robot. For example: today’s robots can easily pick up a can with its rigid regular shape, but what about a half-full bag of flour? And from there, learn to pick up a piece of fresh fruit without bruising it. These tasks share challenges with many other tasks outside of a kitchen.

This isn’t about building a must-have home cooking robot, it’s about working through the range of challenges shared with common kitchen tasks. The lab has a lot of neat hardware, but its success will be measured by the software, and like all research, published results should be reproducible by other labs. You don’t have a high-end robotics lab in your house, but you do have a kitchen. That’s why it’s not just any kitchen, but an IKEA kitchen, to take advantage of the fact they are standardized, affordable, and available around the world for other robot researchers to benchmark against.

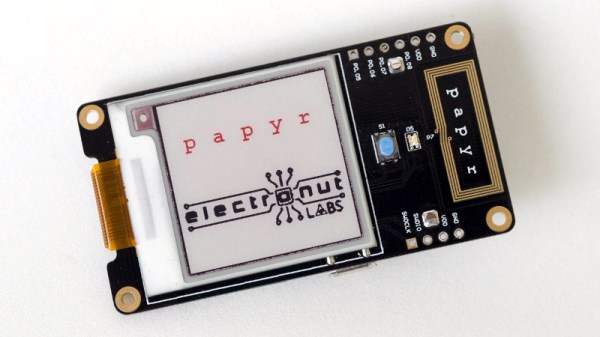

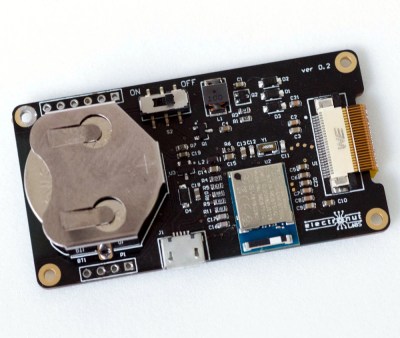

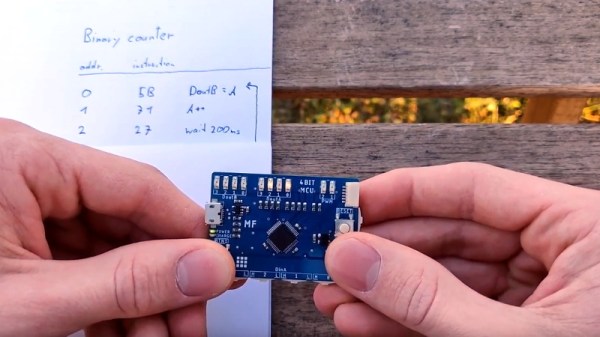

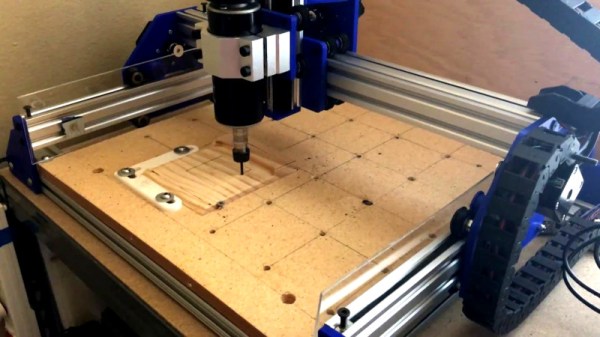

Most of us can experiment in a kitchen, IKEA or not. We have access to all the other tools we need: affordable AI hardware from Google, from Beaglebone, and from Nvidia. And we certainly have no shortage of robot arms and manipulators on these pages, ranging from a small laser-cut MeArm to our 2018 Hackaday Prize winner Dexter.