The languages we speak influence the way we see the world, often in ways most of us never recognize. For example, researchers have reported higher savings rates among people whose native language has little or no grammatical future tense, and one Aboriginal Australian language requires speakers to know the cardinal directions precisely in order to speak it at all. In Alaska, meanwhile, the numerals developed for Iñupiaq, an Inuit language, have an inherently visual design that is reshaping the way children learn and use mathematics, among other things.

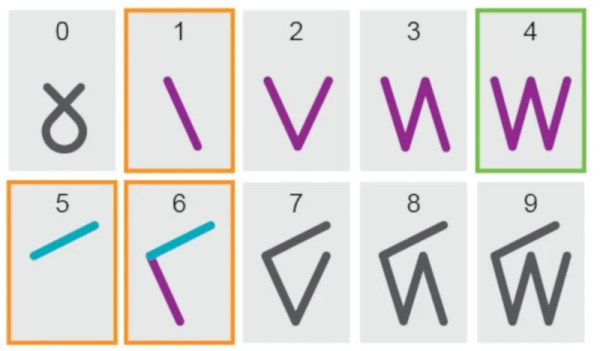

Arabic numerals are near universal in the modern world, but, except perhaps for “1”, they are arbitrary symbols that stand for ideas. Users must already understand the quantities these symbols represent before they can engage with the underlying mathematical structure of the base-10 system. Yet base 10 is not the only possible base, and Arabic digits are not the only way to write numbers. Iñupiaq counts in base 20, and its numerals are designed so that information about each number can be read directly from its visual form.
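As a rough model of this idea, the sketch below writes a number in base 20 and then splits each digit into the strokes it is drawn with. It assumes the commonly described design of these digits, in which each digit combines up to three strokes worth five and up to four strokes worth one, so a digit's value is 5 × fives + ones. This is a simplification of the actual glyph shapes, and the function names `to_base20` and `strokes` are hypothetical, not part of any library.

```python
# Hypothetical helpers for illustration; not part of any library.
# Simplified model: a digit 0-19 is drawn with `fives` five-strokes (0-3)
# and `ones` one-strokes (0-4), so its value is 5 * fives + ones.

def to_base20(n: int) -> list[int]:
    """Return the base-20 digits of a non-negative integer, most significant first."""
    if n < 0:
        raise ValueError("n must be non-negative")
    digits = []
    while True:
        n, d = divmod(n, 20)  # peel off the least significant base-20 digit
        digits.append(d)
        if n == 0:
            break
    return digits[::-1]


def strokes(digit: int) -> tuple[int, int]:
    """Split a base-20 digit (0-19) into its (fives, ones) stroke counts."""
    if not 0 <= digit < 20:
        raise ValueError("digit must be in 0..19")
    return divmod(digit, 5)


for n in (7, 19, 20, 365):
    print(n, [strokes(d) for d in to_base20(n)])
```

Run as written, this shows 19 as a single digit with three five-strokes and four one-strokes, and 365 (eighteen twenties plus five) as two digits, `(3, 3)` followed by `(1, 0)`.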

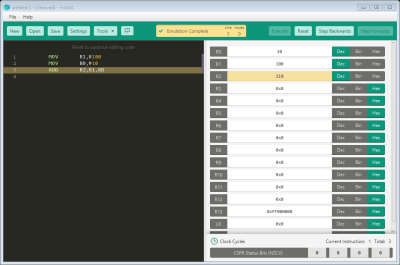

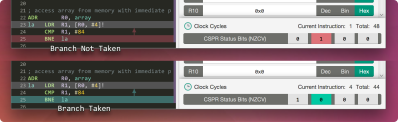

This has some surprising consequences, chiefly that operations such as addition, subtraction, and even long division can be strikingly easy, because the shapes of the characters make each answer visually apparent. Often the operations can be carried out on the characters themselves, whereas in the Arabic system the quantity each digit stands for must be known before it can be manipulated.
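To make "operating on the characters themselves" concrete, here is a minimal sketch of addition under a simplified model in which each base-20 digit is a pair of stroke counts, `(fives, ones)`, with up to three five-strokes and up to four one-strokes. Adding two digits pools their strokes; every five one-strokes are traded for a five-stroke, and every four five-strokes (twenty) become a carry into the next place. This illustrates the idea only and is not a description of how the numerals are taught; `add_digits` and `add_numbers` are hypothetical names.

```python
# Hypothetical sketch: digits are (fives, ones) stroke counts, value = 5 * fives + ones.

def add_digits(a: tuple[int, int], b: tuple[int, int],
               carry_in: int = 0) -> tuple[tuple[int, int], int]:
    """Add two stroke-count digits plus an incoming carry.

    Returns the resulting digit and the carry (0 or 1) into the next base-20 place.
    """
    fives = a[0] + b[0]
    ones = a[1] + b[1] + carry_in
    # Five one-strokes combine into a single five-stroke.
    extra_fives, ones = divmod(ones, 5)
    fives += extra_fives
    # Four five-strokes make twenty, which carries into the next place.
    carry_out, fives = divmod(fives, 4)
    return (fives, ones), carry_out


def add_numbers(x: list[tuple[int, int]],
                y: list[tuple[int, int]]) -> list[tuple[int, int]]:
    """Add two numbers written as lists of (fives, ones) digits, most significant first."""
    # Pad the shorter number with zero digits on the left.
    width = max(len(x), len(y))
    x = [(0, 0)] * (width - len(x)) + x
    y = [(0, 0)] * (width - len(y)) + y
    result = []
    carry = 0
    # Work from the least significant digit, as in ordinary column addition.
    for a, b in zip(reversed(x), reversed(y)):
        digit, carry = add_digits(a, b, carry)
        result.append(digit)
    if carry:
        result.append((0, carry))
    return result[::-1]


# 7 + 19 = 26, i.e. one twenty followed by a digit with one five-stroke and one one-stroke
print(add_numbers([(1, 2)], [(3, 4)]))  # [(0, 1), (1, 1)]
```

The design choice here is that no digit is ever converted to a number: the only rules are "five ones make a five" and "four fives make a carry", which mirrors the stroke structure of the digits.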

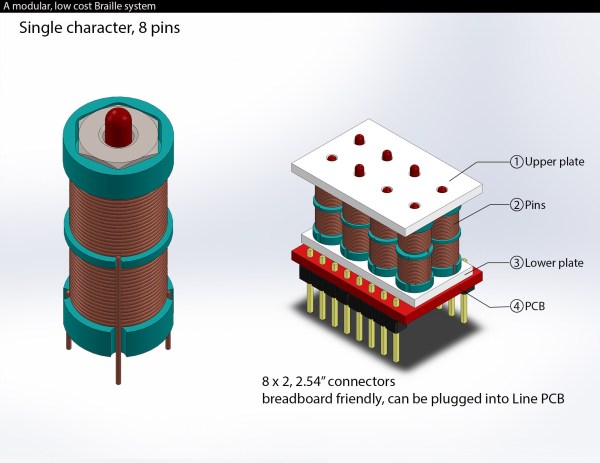

This numeral system was originally created to help ensure that the Iñupiaq language and culture were not lost after centuries of efforts to eradicate them and other Native North American cultures. The numerals have since been encoded in Unicode (version 15.0, released in 2022), which means they can be printed in textbooks and used in computer programming. That opens doors not only for native speakers of the language but also for educators who want to use its unique characteristics to help students understand mathematics rather than simply learn it by rote.
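As a small illustration of using the numerals in software, the sketch below converts integers to and from Unicode text. It assumes the Kaktovik Numerals block of Unicode, which places the digits zero through nineteen at consecutive code points U+1D2C0 through U+1D2D3. The glyphs only display with a font that supports this block, so the example prints code points rather than the characters themselves. The names `to_kaktovik` and `from_kaktovik` are illustrative.

```python
# Assumption: Kaktovik digits 0-19 occupy consecutive code points starting at U+1D2C0.
KAKTOVIK_ZERO = 0x1D2C0


def to_kaktovik(n: int) -> str:
    """Render a non-negative integer as a string of Kaktovik digit characters."""
    if n < 0:
        raise ValueError("n must be non-negative")
    chars = []
    while True:
        n, d = divmod(n, 20)  # next base-20 digit, least significant first
        chars.append(chr(KAKTOVIK_ZERO + d))
        if n == 0:
            break
    return "".join(reversed(chars))


def from_kaktovik(s: str) -> int:
    """Parse a string of Kaktovik digit characters back into an integer."""
    value = 0
    for ch in s:
        d = ord(ch) - KAKTOVIK_ZERO
        if not 0 <= d < 20:
            raise ValueError(f"not a Kaktovik digit: {ch!r}")
        value = value * 20 + d
    return value


# Print code points so the output is readable even without a supporting font.
print([f"U+{ord(c):04X}" for c in to_kaktovik(365)])  # ['U+1D2D2', 'U+1D2C5']
```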