Even in a world that has gone as far off the rails as this one currently has, we’re going to go out on a limb and say that this machine-learning, servo-powered prayer bot is going to be the strangest thing you see today. We’re happy to be wrong about that, though, and if we are, please send links.

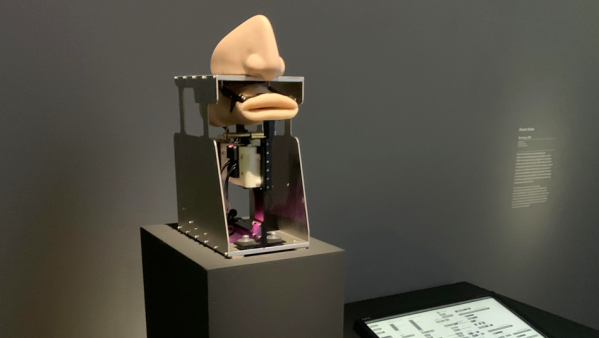

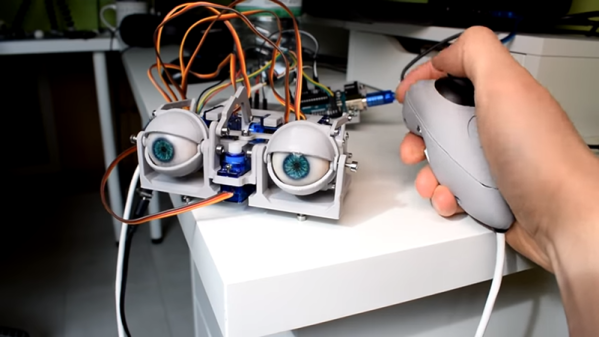

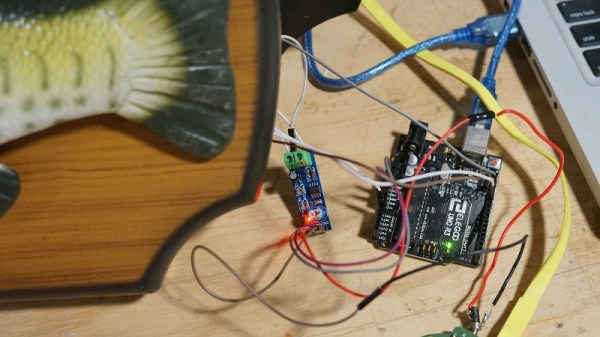

“The Prayer,” as [Diemut Strebe]’s work is called, may look strange, but it’s another in a string of pieces by various artists that explore just what it means to be human at a time when machines are blurring the line between them and us. The hardware is straightforward: a silicone rubber representation of a human nasopharyngeal cavity, servos for moving the lips, and a speaker to create the vocals. The vocals are generated by a machine-learning algorithm that was trained on the sacred texts of many of the world’s major religions, including the Christian Bible, the Koran, the Bhagavad Gita, Taoist texts, and the Book of Mormon. The algorithm analyzes the structure of sacred verses and generates random prayers and hymns that sound a lot like the real thing, with Amazon Polly providing the voice. That the lips move in synchrony with the ersatz devotions only adds to the otherworldliness of the piece. Watch it in action below.

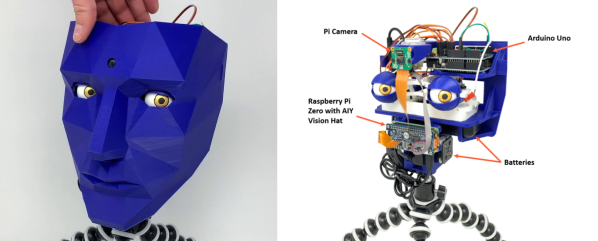
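For a rough feel of how text can be generated from the structure of a corpus, here is a toy sketch. To be clear, this is not [Diemut Strebe]’s method, which isn’t detailed here; it swaps in a simple word-level Markov chain, and the `CORPUS` string, `build_chain`, and `generate` are all made-up names and placeholder data for illustration. The speech step (Amazon Polly in the actual piece) is left out entirely.

```python
import random
from collections import defaultdict

# Placeholder text invented for this sketch; the real piece was trained
# on actual sacred texts, which are not reproduced here.
CORPUS = (
    "grant us peace and grant us light and grant us rest "
    "in the morning and in the evening grant us peace "
    "and in the evening grant us light and rest"
)

def build_chain(text, order=2):
    """Map each run of `order` words to the words seen right after it."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        key = tuple(words[i:i + order])
        chain[key].append(words[i + order])
    return chain

def generate(chain, length=12, seed=None):
    """Walk the chain from a random starting state, up to `length` words."""
    rng = random.Random(seed)
    state = rng.choice(sorted(chain))  # sorted so a seed gives repeatable output
    out = list(state)
    while len(out) < length:
        followers = chain.get(state)
        if not followers:  # dead end: this state was never followed by anything
            break
        out.append(rng.choice(followers))
        state = tuple(out[-len(state):])
    return " ".join(out)

if __name__ == "__main__":
    print(generate(build_chain(CORPUS), length=12, seed=1))
```

Every word triple in the output already appears somewhere in the corpus, which is why even a model this crude can produce text with a familiar cadence. A real system would presumably use something far more capable.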

We’ve featured several AI-based projects that poke at some interesting questions. This kinetic sculpture that uses machine learning to achieve balance comes to mind, while AI has even been employed in the search for spirits from the other side.
