If you flew or drove anything remote-controlled before the last few years, chances are very good that you were using some faceless corporation’s equipment and radio protocols. But recently, open-source options have taken over the market, at least among the enthusiast core who are into squeezing every last bit of performance out of their gear. So why not take it one step further and roll your own complete system?

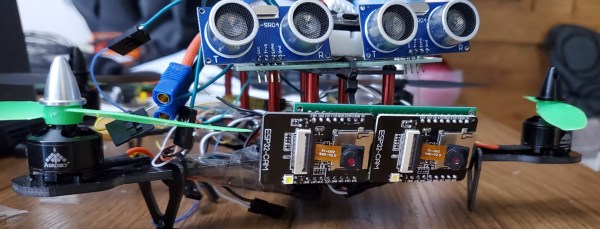

Apparently, that’s what [Malcolm Messiter] was thinking when, during the COVID lockdowns, he started his own RC project that he’s calling LockDownRadioControl. The result covers the entire stack, from the protocol to the transmitter and receiver hardware, even to the software that runs it all. The 3D-printed remote sports a Teensy 4.1 and off-the-shelf radio modules on the inside, and premium FrSky hardware on the outside. He’s even got an extensive folder of sound effects that the controller can play to alert you. It’s very complete. Heck, the transmitter even has a game of Pong implemented so that you can keep yourself amused when it’s too rainy to go flying.

Of course, as we alluded to in the beginning, there is a healthy commercial infrastructure and community around other open-source RC projects, namely ExpressLRS and OpenTX, and you can buy gear that runs that software straight out of the box. But it never hurts to have alternatives. And nothing is easier to customize and start hacking on than something you built yourself, so maybe [Malcolm]’s full-stack RC solution is right for you? Either way, it’s certainly impressive for a lockdown project, and evidence of time well spent.

Thanks, [Malcolm], for sending this one in!