One of the cringier aspects of AI as we know it today has been the proliferation of deepfake technology to make nude photos of anyone you want. What if you took away the abstraction and put the faker and subject in the same space? That’s the question the NUCA camera was designed to explore. [via 404 Media]

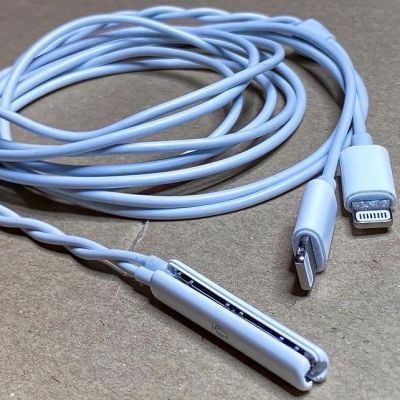

[Mathias Vef] and [Benedikt Groß] designed the NUCA camera “with the intention of critiquing the current trajectory of AI image generation.” The camera itself is a fairly unassuming device: a 3D-printed digital camera (19.5 × 6 × 1.5 cm) with a 37 mm lens. When the shutter button is pressed, the camera generates a nude image of the subject.

The final image is generated from a combination of the photo of the subject, pose data, and facial landmarks. The photo is run through a classifier that identifies features such as age, gender, and body type, which are then used to build a text prompt for Stable Diffusion. The subject's original face is then stitched onto the generated nude image and aligned with the estimated pose. Many of the sample images on the project’s website reflect the bias of AI datasets toward certain beauty ideals.

Looking for more ways to use AI with cameras? How about this one that uses GPS to imagine a scene instead? Prefer to keep AI out of your endeavors to invade personal space? How about building your own TSA body scanner?