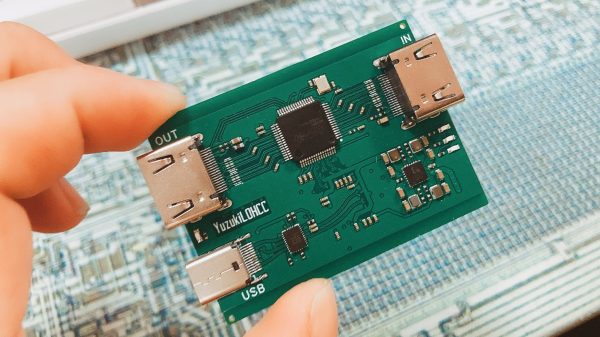

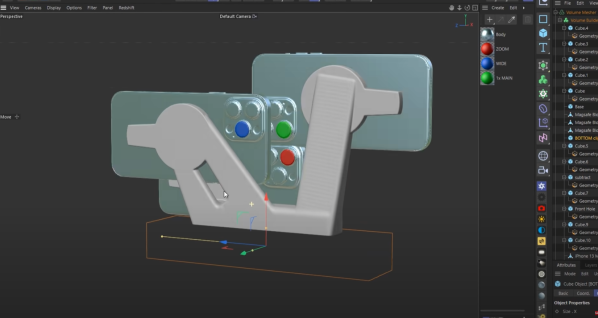

[YuzukiHD] has provided files for anyone who wishes to build their own HDMI capture card at home. The design is known as the Yuzuki Loop Out HDMI Capture Card PRO, or YuzukiLOHCC PRO for short.

The build is based on the MS2130, an HD video and audio capture chip that’s compatible with USB 3.2 Gen 1. We’re pretty sure that’s now called USB 3.2 Gen 1×1, and that standard is capable of transfers at up to 5 Gbps. The chip can support HDMI at up to 4K resolution at 60 Hz, depending on the exact signals being passed down the line. It’s compatible with YUV422 and MJPEG modes, and can be used with software like OBS Studio and FFmpeg. The board itself is relatively simple: it features an HDMI In port, an HDMI Out port, and a USB-C port for hooking up to a computer for capture.
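
For a rough sense of why the "depending on the exact signals" caveat and the MJPEG mode matter, here is a back-of-the-envelope sketch in Python comparing raw video data rates against the 5 Gbps link. It assumes 8-bit YUV422 at 16 bits per pixel, and it ignores HDMI blanking as well as USB encoding and protocol overhead, so real usable throughput will be lower than the headline figure. The function names are ours, purely for illustration, and nothing here comes from the MS2130 datasheet.

```python
# Rough estimate of uncompressed video bandwidth versus the USB link rate.
# Assumptions (ours, not from the chip's documentation):
#   - 8-bit YUV422, which averages 16 bits per pixel
#   - blanking intervals and USB protocol overhead are ignored

USB_GEN1_SIGNALING_GBPS = 5.0   # headline rate of USB 3.2 Gen 1x1
BITS_PER_PIXEL_YUV422 = 16      # 8 bits luma + 8 bits shared chroma


def raw_video_gbps(width, height, fps, bits_per_pixel=BITS_PER_PIXEL_YUV422):
    """Return the uncompressed data rate of a video mode in Gbps."""
    return width * height * fps * bits_per_pixel / 1e9


def fits_in_link(width, height, fps, link_gbps=USB_GEN1_SIGNALING_GBPS):
    """True if the raw stream is below the link's headline signaling rate."""
    return raw_video_gbps(width, height, fps) < link_gbps


if __name__ == "__main__":
    modes = [(1920, 1080, 60), (3840, 2160, 30), (3840, 2160, 60)]
    for width, height, fps in modes:
        rate = raw_video_gbps(width, height, fps)
        verdict = "fits" if fits_in_link(width, height, fps) else "exceeds link"
        print(f"{width}x{height} @ {fps} Hz: {rate:.2f} Gbps ({verdict})")
```

By this estimate, uncompressed 1080p60 sits comfortably under the link rate, while uncompressed 4K60 does not, which is where a compressed mode like MJPEG or a lower capture resolution or frame rate comes in.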

HDMI capture cards can be expensive and fussy things, so you may find it pays to roll your own. Plus, the design is open source under the CERN Open Hardware License V2, which means you can make changes to suit your own use case if you so desire.

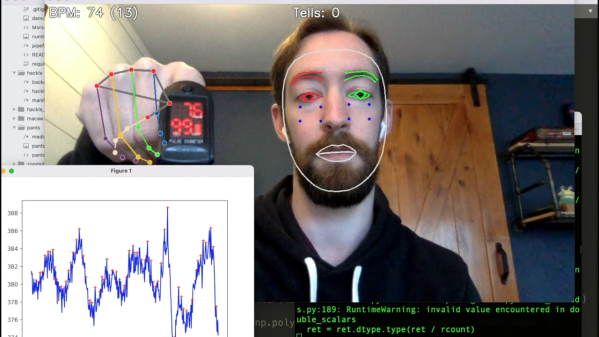

We’ve seen some other hilarious video capture tricks over the years, such as a convoluted rig that uses a SNES to turn a Game Boy Camera into a usable webcam. If you’ve got any such madcap hacks brewing up in your lab, be sure to let us know!