Anyone who has played an online shooter in the past two or three decades has almost certainly come across a person or machine that cheats by auto-aiming. Newer games with anti-cheat systems suffer less from this, but older games like Team Fortress 2 have been effectively ruined by these aimbots. These cheats are usually implemented in software, though, and [Kamal] wondered whether he could build an aimbot that works directly on the hardware instead.

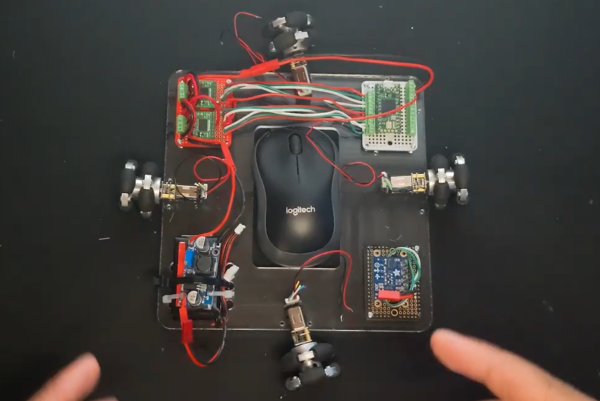

First, we’ll reassure everyone frustrated with the state of games like TF2 that this is a proof-of-concept robot and is unlikely to make aimbots worse or more common in any game. This is mostly because [Kamal] is training his machine in Aim Lab, a first-person shooter training simulator, rather than in a real multiplayer video game. The robot works by taking screenshots of his computer's display in Python and passing them through a computer vision algorithm that recognizes high-contrast targets. From there, a PID controller drives a set of omniwheels attached to the mouse to move the cursor toward the target, and when the cursor is inside the hitbox, a mouse click is triggered.
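The article doesn't publish [Kamal]'s code, so the following is only a minimal pure-Python sketch of the loop it describes, under several assumptions: the screenshot is modeled as a grayscale frame stored as nested lists, "high-contrast target" detection is approximated by thresholding bright pixels and taking their centroid, and each axis gets its own PID controller whose output is treated as a cursor velocity command. The names `find_target`, `PID`, `in_hitbox`, `aim_step`, `threshold`, and `hit_radius` are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical stand-in for a screenshot: grayscale values 0-255, row-major.
Frame = List[List[int]]
Point = Tuple[float, float]


def find_target(frame: Frame, threshold: int = 200) -> Optional[Point]:
    """Return the (x, y) centroid of pixels at or above `threshold`, or None.

    Assumption: a simple brightness threshold stands in for whatever
    computer vision step the real build uses to find high-contrast targets.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(frame):
        for x, value in enumerate(row):
            if value >= threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None
    return sum_x / count, sum_y / count


@dataclass
class PID:
    """Textbook PID controller: output = kp*e + ki*integral(e) + kd*de/dt."""
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, error: float, dt: float) -> float:
        self.integral += error * dt
        # No derivative term on the first sample, since there is no previous error yet.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def in_hitbox(cursor: Point, target: Point, radius: float) -> bool:
    """True when the cursor lies within a circular hitbox around the target."""
    dx, dy = target[0] - cursor[0], target[1] - cursor[1]
    return dx * dx + dy * dy <= radius * radius


def aim_step(frame: Frame, cursor: Point, pid_x: PID, pid_y: PID,
             dt: float, hit_radius: float = 3.0) -> Tuple[float, float, bool]:
    """One control cycle: returns (x velocity, y velocity, whether to click)."""
    target = find_target(frame)
    if target is None:
        return 0.0, 0.0, False
    vx = pid_x.update(target[0] - cursor[0], dt)
    vy = pid_y.update(target[1] - cursor[1], dt)
    return vx, vy, in_hitbox(cursor, target, hit_radius)


if __name__ == "__main__":
    # Synthetic 20x20 frame with a bright 3x3 target centred at (14, 5).
    frame = [[0] * 20 for _ in range(20)]
    for y in range(4, 7):
        for x in range(13, 16):
            frame[y][x] = 255

    cursor = [2.0, 2.0]
    pid_x, pid_y = PID(5.0, 0.0, 0.0), PID(5.0, 0.0, 0.0)
    dt = 0.1
    for step in range(50):
        vx, vy, fire = aim_step(frame, (cursor[0], cursor[1]), pid_x, pid_y, dt)
        if fire:
            print(f"click at step {step}, cursor={cursor}")
            break
        # Idealized plant: the cursor moves exactly by velocity * dt.
        cursor[0] += vx * dt
        cursor[1] += vy * dt
```

The gains `kp`, `ki`, and `kd` are exactly the knobs that need tuning. The demo's idealized plant moves the cursor perfectly on every step, whereas a physical omniwheel rig adds motor lag, wheel slip, and screenshot latency that the gains have to cope with.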

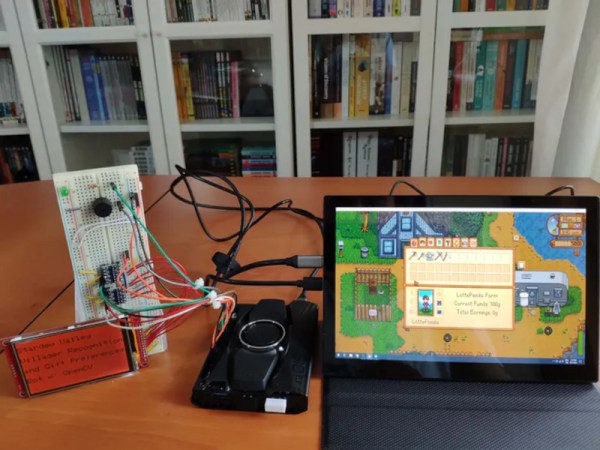

While it might seem straightforward, building the robot and, more importantly, tuning the PID controller took [Kamal] over two months before he could rival professional FPS players on the aim trainer. It’s an impressive build, and if one of his omniwheel motors hadn’t burned out, it might have exceeded the top human scores on the platform. If you would like a bot that makes you worse at a game instead of better, though, head over to this build, which plays Valorant using two computers that pass game information between them.