One of the first logic circuits most of us learn about is the humble flip-flop. Flip-flops are easy enough to build with just a couple of NOR or NAND gates, and even building one up from discrete components isn’t too much of a chore. But building a flip-flop from chemicals and lasers is another thing entirely.

That’s the path [Markus Bindhammer] took for his photochromic molecular switch. We suspect this is less of an attempt at a practical optical logic component and more of a demonstration project, but either way, it’s pretty cool. Photochromism is the property by which molecules reversibly rearrange themselves and change color upon exposure to light, the most common example being glass that darkens automatically in the sun. This principle can be used to create an optical flip-flop, which [Markus] refers to as an “RS” type but we’re pretty sure he means “SR.”
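For reference, here is a minimal Python sketch of the conventional SR latch built from two cross-coupled NOR gates, the electronic circuit the optical version is being compared to. The function names `nor` and `sr_latch_step` are our own, and the fixed iteration count is just a simple way to let the feedback loop settle in software; none of this comes from [Markus]'s build.

```python
def nor(a: bool, b: bool) -> bool:
    """Two-input NOR gate."""
    return not (a or b)


def sr_latch_step(s: bool, r: bool, q: bool, q_bar: bool) -> tuple[bool, bool]:
    """Apply inputs S and R to a cross-coupled NOR latch and return (Q, Q_bar).

    Both gates are re-evaluated from the previous outputs until nothing
    changes, which mimics the feedback loop settling. S = R = 1 is the
    usual forbidden input and is not handled specially here.
    """
    for _ in range(4):  # a few passes are enough for the valid input cases
        new_q = nor(r, q_bar)
        new_q_bar = nor(s, q)
        if (new_q, new_q_bar) == (q, q_bar):
            break
        q, q_bar = new_q, new_q_bar
    return q, q_bar
```

Starting from the reset state (`q=False`, `q_bar=True`), pulsing `s` sets the latch, holding both inputs low keeps the stored bit, and pulsing `r` clears it again.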

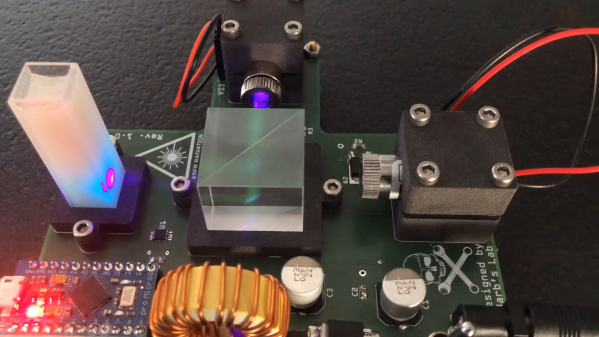

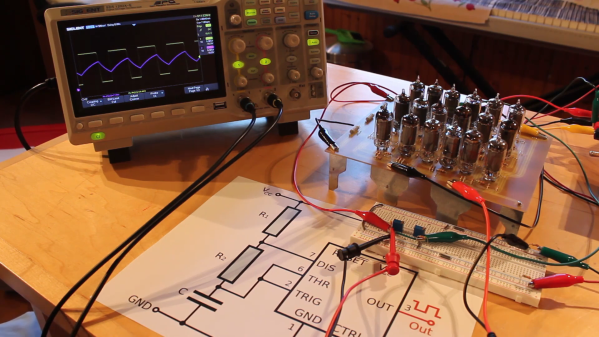

The electronics for this are pretty simple, with two laser modules and their drivers, a power supply, and an Arduino to run everything. The optics are straightforward as well: a beam splitter directs the beams from each laser onto the target, which is a glass cuvette filled with a clear epoxy resin mixed with a photochromic chemical. [Markus] chose spiropyran as the pigment, which, when bathed in UV light, undergoes an intramolecular carbon-oxygen bond breakage that turns it into the dark blue pigment merocyanine. Hitting the spot with a red laser or heating the cuvette causes the C-O bond to reform, fading the blue spot.
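The set/reset behavior described above can be sketched as a tiny state model in Python. This is only an illustration of the logic, not [Markus]'s Arduino code: the class and method names are hypothetical, and the model treats switching as instantaneous and ignores the gradual thermal fading at ambient temperature.

```python
from enum import Enum


class Form(Enum):
    """The two molecular forms mentioned in the article."""
    SPIROPYRAN = "clear"       # ring closed, C-O bond intact
    MEROCYANINE = "dark blue"  # ring open, C-O bond broken


class PhotochromicSwitch:
    """Hypothetical model of the illuminated spot as a one-bit SR-style latch."""

    def __init__(self) -> None:
        self.form = Form.SPIROPYRAN  # assume the spot starts out clear

    def expose_uv(self) -> None:
        """SET: UV light breaks the C-O bond, producing merocyanine."""
        self.form = Form.MEROCYANINE

    def expose_red(self) -> None:
        """RESET: red light lets the C-O bond reform."""
        self.form = Form.SPIROPYRAN

    def heat(self) -> None:
        """RESET: heating the cuvette also reforms the bond."""
        self.form = Form.SPIROPYRAN

    @property
    def q(self) -> bool:
        """Latch output: True while the spot is blue."""
        return self.form is Form.MEROCYANINE
```

In this picture the UV laser plays the role of the S input, the red laser (or heat) plays the role of R, and the color of the spot is the stored bit.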

The video below shows the intensely blue spot developing under UV light and rapidly fading thanks to just the ambient temperature. To make the effect last longer, [Markus] cools the target with a spritz from a CO2 cartridge. We imagine other photochromic chemicals could also be employed here, as could some kind of photometric sensor to read the current state of the flip-flop. Even as it is, though, this is an interesting way to put chemistry and optics to work.
