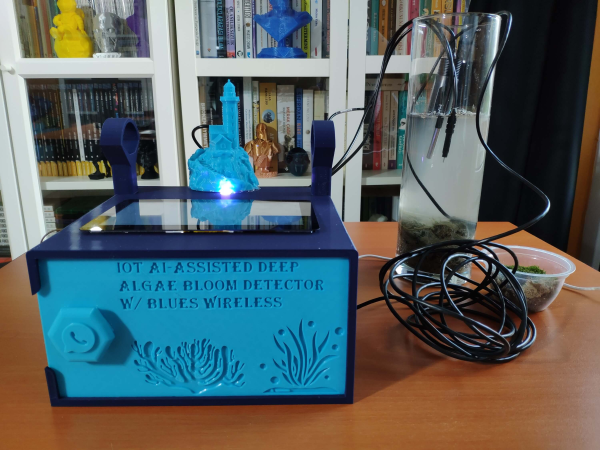

If you live in a place where you can buy Arduinos and Raspberry Pis locally, you probably don’t spend much time worrying about your water supply. But in some parts of the world, clean water is nothing to take for granted: bad water accounts for as many as 500,000 deaths worldwide every year. Scientists have reported a graphene sensor they say costs a buck and can detect dangerous bacteria and heavy metals in drinking water.

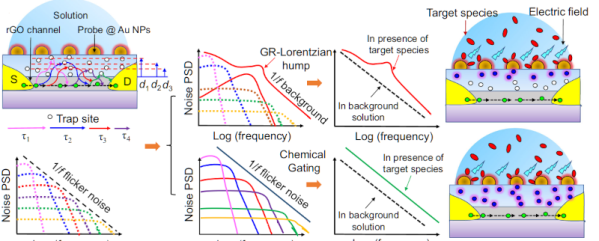

The sensor uses a GFET (a graphene-based field-effect transistor) to detect lead, mercury, and E. coli bacteria. Interestingly, the FET’s transfer characteristic changes based on what it is exposed to. We were, frankly, a bit surprised that this is repeatable enough to give you useful data. But apparently it is, especially when you use a neural network to interpret the results.
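To make the idea concrete, here is a minimal Python sketch of the general approach: boil each transfer sweep down to a couple of features (where the current bottoms out, and how low it goes) and match them against known conditions. It swaps the researchers’ neural network for a much simpler nearest-centroid rule. The toy curve model, the `Sweep`/`classify` names, and every reference number are hypothetical, made up for illustration rather than taken from the paper.

```python
import math
from dataclasses import dataclass


@dataclass
class Sweep:
    """One GFET transfer sweep: gate voltages (V) and drain currents (A)."""
    gate_v: list[float]
    drain_a: list[float]


def synthetic_sweep(v_dirac: float, i_min_a: float,
                    slope_a_per_v: float = 2e-6, width_v: float = 0.1) -> Sweep:
    """Toy V-shaped transfer curve with its minimum at v_dirac (illustrative only)."""
    gate_v = [round(-2.0 + 0.1 * k, 3) for k in range(41)]  # -2 V to +2 V in 0.1 V steps
    drain_a = [
        i_min_a + slope_a_per_v * (math.hypot(v - v_dirac, width_v) - width_v)
        for v in gate_v
    ]
    return Sweep(gate_v, drain_a)


def extract_features(sweep: Sweep) -> tuple[float, float]:
    """Return (Dirac-point voltage in V, minimum current in uA).

    The Dirac point is simply the sampled gate voltage with the lowest current,
    a crude stand-in for properly fitting the curve.
    """
    idx = min(range(len(sweep.drain_a)), key=sweep.drain_a.__getitem__)
    return sweep.gate_v[idx], sweep.drain_a[idx] * 1e6


# HYPOTHETICAL reference points: (Dirac voltage in V, minimum current in uA).
# These numbers are invented for illustration, not taken from the paper.
REFERENCE = {
    "clean": (0.0, 10.0),
    "lead": (0.4, 9.0),
    "mercury": (0.8, 8.5),
    "e_coli": (-0.3, 7.0),
}


def classify(sweep: Sweep) -> str:
    """Label a sweep with the nearest reference point (nearest-centroid rule)."""
    features = extract_features(sweep)
    return min(REFERENCE, key=lambda label: math.dist(features, REFERENCE[label]))


print(classify(synthetic_sweep(v_dirac=0.42, i_min_a=9.1e-6)))  # prints "lead"
```

A real sensor would need calibration data for each contaminant, sturdier features than the single lowest sample, and probably something closer to the neural network the researchers used, since mixtures and varying concentrations won’t sit neatly on four points.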

What’s more, the device might also be able to detect other contaminants, such as pesticides. While the materials in the sensor might cost only a dollar, it sounds like you’d need a big equipment budget to reproduce it: the process involves silicon wafers, spin coating, oxygen plasma, and lithography. Not something you’ll whip up in the garage this weekend.

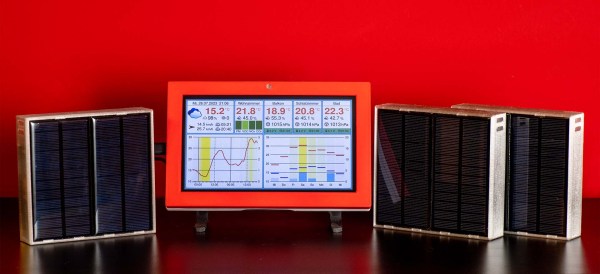

Still, it is interesting to see a FET used this way, and a cheap way to monitor water quality would be welcome. Using machine learning with water sensors isn’t a new idea, either. Of course, the sensor is only one part of the equation. Monitoring is the other.