Some careful measuring and a little extra effort can be all that separates what looks like a hack job from a slick end product, and that is apparent in [Eric Sorensen]’s classy retrogaming rig, complete with ports for original console controllers.

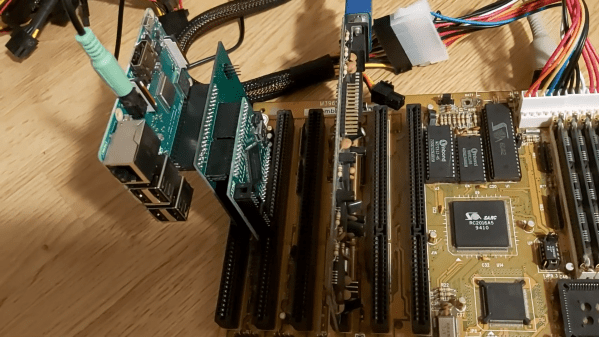

[Eric] likes his vintage gaming, and was terrifically pleased with MiSTer, an open-source project that recreates various classic computers, game consoles and arcade machines using modern FPGA-based hardware. Of course, what makes retro gaming even better is using a platform’s genuine original controllers, which just takes a little extra hardware and wiring.

But [Eric] found that all the required accessories and peripherals started to look awfully cluttered. He solved this issue by packing everything carefully into a specialty PC case called the Checkmate A1500 Plus, which gives off a strong 80s design vibe. As a bonus, the front panels are all removable and that’s where [Eric] decided to house the custom controller ports.

First [Eric] carefully measured each controller connector to create CAD models, then designed matching front panels to house the connectors and 3D printed them. Once that was done, post-processing the panels was a long process of apply Bondo, sand, paint, and repeat as needed. The results look fantastic, and this project is a prime example of how aesthetics and finish can matter.

Find yourself in a similar situation? [Tom Nardi] has shown us all that 3D prints don’t have to look 3D-printed, and careful application of paint and primer can really put the ‘pro’ in prototyping.