Compared to their manual counterparts, electric wheelchairs are far less demanding to operate, as the user doesn’t need the upper body strength normally required to turn the wheels. But even a motorized wheelchair needs some kind of input from the user to control it, which may still pose a considerable challenge depending on the individual’s specific abilities.

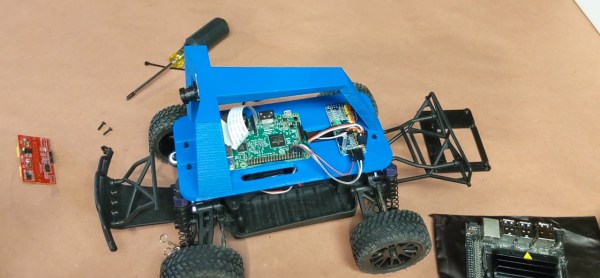

Hoping to improve on the situation, [Kabilan KB] has developed a self-driving electric wheelchair that can navigate around obstacles by feeding the output of an Intel RealSense Depth Camera and LiDAR module into a Jetson Nano Developer Kit running OpenCV. To control the actual motors, the Jetson is connected to an Arduino which in turn is wired into a common L298N motor driver board.
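
To give a rough idea of what the decision step in such a pipeline might look like, here is a minimal Python sketch. It is not [Kabilan]'s code: the zone split, the 0.6 m stop distance, and the single-letter commands (`F`, `L`, `R`, `S`) are all assumptions made for illustration, and the depth frame is a plain list of rows in meters rather than real camera output.

```python
# Illustrative sketch only; thresholds and command letters are assumed.
STOP_DISTANCE_M = 0.6  # hypothetical minimum clearance before stopping or turning


def zone_clearance(depth_rows, zones=3):
    """Return the nearest valid depth reading in each vertical zone, left to right.

    depth_rows is a list of equal-length rows of distances in meters.
    A value of 0 is treated as "no reading" and ignored.
    A zone with no valid readings reports 0.0, so it is treated as blocked.
    """
    width = len(depth_rows[0])
    nearest = [None] * zones
    for row in depth_rows:
        for x, distance in enumerate(row):
            if distance <= 0:
                continue  # skip invalid pixels
            zone = min(x * zones // width, zones - 1)
            if nearest[zone] is None or distance < nearest[zone]:
                nearest[zone] = distance
    return [0.0 if n is None else n for n in nearest]


def choose_command(depth_rows, stop_distance=STOP_DISTANCE_M):
    """Pick a drive command from the clearance in the left, center, and right zones."""
    left, center, right = zone_clearance(depth_rows)
    if center > stop_distance:
        return "F"  # path ahead is clear, keep going forward
    if max(left, right) <= stop_distance:
        return "S"  # boxed in on all sides, stop
    return "L" if left > right else "R"  # steer toward the more open side


if __name__ == "__main__":
    frame = [[2.0, 2.0, 0.4, 0.4, 1.0, 1.0]]  # obstacle directly ahead
    print(choose_command(frame))
```

On real hardware, the chosen letter would presumably be sent to the Arduino over a serial link, with the Arduino translating it into direction and PWM signals for the L298N. That part is left out here because the actual protocol isn't described.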

As [Kabilan] explains on the NVIDIA Blog, he specifically chose off-the-shelf components and the most affordable electric wheelchair he could find to bring the total cost of the project as low as possible. An undergraduate from the Karunya Institute of Technology and Sciences in Coimbatore, India, he notes that this sort of assistive technology is usually only available to more affluent patients. With his cost-saving measures, he hopes to address that imbalance.

While automatic obstacle avoidance would already be a big help for many users, [Kabilan] imagines improved software taking things a step further. For example, a user could simply press a button to indicate which room of the house they want to move to, and the chair could drive itself there automatically. With increasingly powerful single-board computers and the state of open source self-driving technology steadily improving, it’s not hard to imagine a future where this kind of technology is commonplace.