Unless you were lucky enough to afford a floppy disk drive, you probably stored programs and data on cassette tapes if you used pretty much any home computer in the 1980s. ZX Spectrum users, however, had another option in the form of the Microdrive. This was a rather unusual continuous-loop mini-tape cartridge that could store around 100 kB and load it at lightning speed, all at a much lower price point than a floppy drive. The low price came at the cost of poor durability, however, and after four decades it’s becoming harder and harder to find cartridges that work reliably. [Derek Fountain] therefore set out to make a modern Microdrive emulator that stores data on SD cards.

Several projects already exist to replace Microdrives, but they typically also need the ZX Interface 1, a serial/network expansion module that’s becoming equally hard to find. Hence [Derek]’s choice to make his emulator a completely standalone system that directly plugs into the Spectrum’s expansion port.

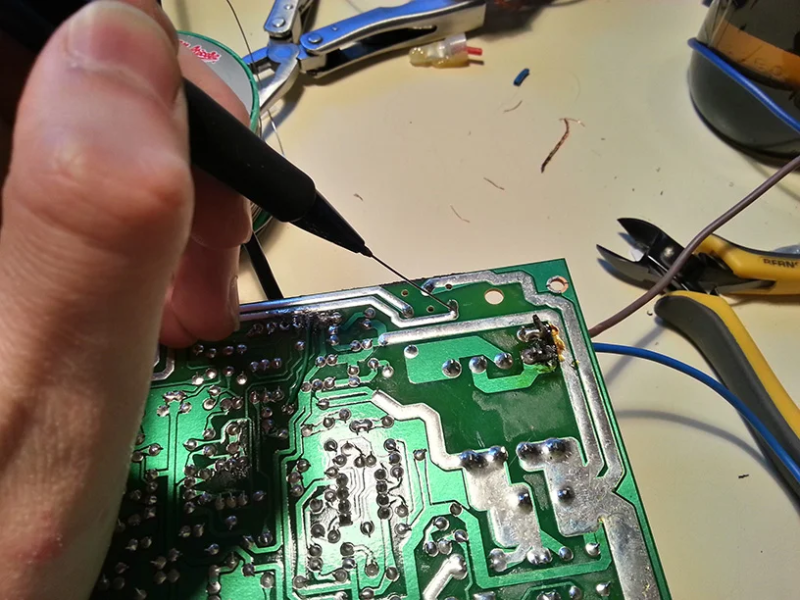

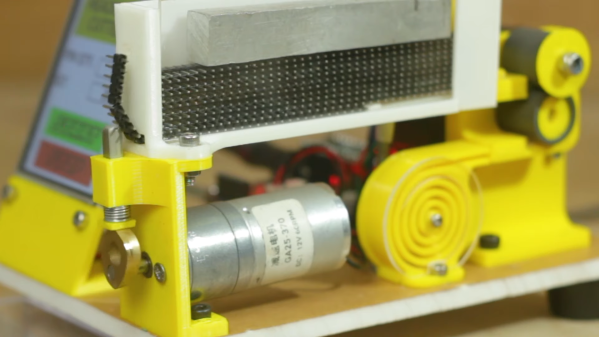

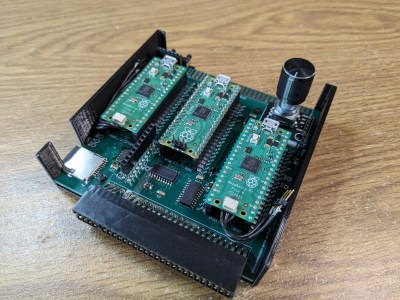

The system is housed in a 3D-printed enclosure that holds two PCBs. Three Raspberry Pi Picos run the show inside: one to hold the ZX Interface 1’s ROM image and interface with the Spectrum’s bus, another to simulate the Microdrive, and a third to run the user interface and communicate with the SD card. The user can choose between eight tape images stored in .MDR format by using two pushbuttons and a rotary encoder, with a small OLED display showing the machine’s configuration.

While you might think that three dual-core 133 MHz ARM CPUs would run circles around the Spectrum’s Z80, it actually took quite a bit of work to get everything running properly in real time. The 3.5 MHz bus clock rate gave the second Pico precious little time to fetch the required bytes out of its flash memory. Its RAM was fast enough for that, but too small to hold all eight tape images at the same time. In the end, [Derek] settled on using a separate 8 MB SPI DRAM chip that could easily keep up with the data rate, with the Pico just using its GPIO ports to shuttle the data around.
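To put rough numbers on that squeeze, here is a minimal back-of-envelope sketch in Python. The clock rates and the image count come from the text above. The other figures are assumptions on our part and not taken from [Derek]’s documentation: an .MDR image size of 254 sectors of 543 bytes plus one write-protect byte, 264 kB of on-chip SRAM for the Pico’s RP2040, and "8 MB" read as 8 MiB. The function names are purely illustrative.

```python
# Back-of-envelope check of the timing and memory figures discussed above.

# Figures quoted in the article.
PICO_CLOCK_HZ = 133_000_000   # Pico CPU clock
Z80_BUS_HZ = 3_500_000        # Spectrum bus clock
NUM_IMAGES = 8                # selectable tape images

# Assumptions, not taken from the article.
MDR_IMAGE_BYTES = 254 * 543 + 1        # 254 sectors of 543 bytes plus a write-protect byte
PICO_SRAM_BYTES = 264 * 1024           # RP2040 on-chip SRAM
EXTERNAL_RAM_BYTES = 8 * 1024 * 1024   # "8 MB" read as 8 MiB


def pico_cycles_per_bus_clock(pico_hz: int = PICO_CLOCK_HZ,
                              bus_hz: int = Z80_BUS_HZ) -> float:
    """Pico CPU cycles that elapse during one Spectrum bus clock period."""
    return pico_hz / bus_hz


def total_image_bytes(count: int = NUM_IMAGES,
                      image_bytes: int = MDR_IMAGE_BYTES) -> int:
    """Bytes needed to keep `count` tape images resident at once."""
    return count * image_bytes


if __name__ == "__main__":
    total = total_image_bytes()
    print(f"Pico cycles per bus clock: {pico_cycles_per_bus_clock():.0f}")
    print(f"Eight images: {total} bytes")
    print(f"Fits in on-chip SRAM: {total <= PICO_SRAM_BYTES}")
    print(f"Fits in external RAM: {total <= EXTERNAL_RAM_BYTES}")
```

Under these assumptions the Pico gets only about 38 of its own clock cycles per bus clock, and the eight images add up to roughly 1.1 MB, around four times the on-chip SRAM but comfortably within the external chip. A real Z80 memory access spans a few bus clocks, so treat the cycle count as an order-of-magnitude guide and not a measured budget.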

All source code and extensive documentation are available on [Derek]’s excellent blog post and GitHub page. Be sure to also check out [Jenny]’s detailed review and teardown if you’d like to know more about the weird and wonderful Microdrive system.

Thanks for the tip, [Andrew]!