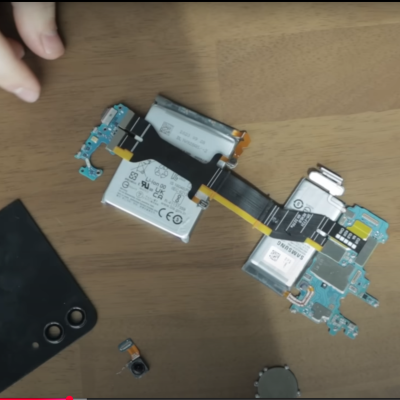

PostmarketOS is a Linux distribution specifically designed for those who wish to repurpose old smartphones as general-use computers, to a degree. This can be a great way to reuse old hardware. However, for [Bry50], it was somewhat discomforting leaving the phone’s aging lithium battery perpetually on charge. A bit of code was thus whipped up to provide a greater measure of safety.

The concept is simple enough: lithium batteries are at lower risk of surprise combustion events if they’re held at a lower state of charge. To this end, [Bry50] modified the device tree in PostmarketOS to change the maximum charge level. Apparently, maximum charge was set at a lofty 4.4 V (100%), but this was reconfigured to a lower level of 3.8 V, corresponding to a roughly 40-50% state of charge. The idea is that this is a much healthier way to maintain a battery hooked up to power for long periods of time. There’s one small hitch, though: the system will get confused if the battery voltage is higher than the 3.8 V setpoint when switching over. It’s thus important to let the device discharge below that level before you make this change.
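If you want to check that the battery has sagged far enough before switching over, a quick script can do the comparison for you. What follows is only a minimal sketch, not [Bry50]’s code. It assumes the kernel exposes the battery through the standard `power_supply` sysfs class, where `voltage_now` is reported in microvolts. The directory name `battery` is a hypothetical placeholder, since the actual name varies from device to device, and the helper names `read_voltage_uv` and `safe_to_switch` are made up for illustration.

```python
from pathlib import Path

# 3.8 V setpoint from the article, expressed in microvolts (the sysfs unit).
SETPOINT_UV = 3_800_000


def read_voltage_uv(supply_dir):
    """Read the battery voltage in microvolts from a power_supply sysfs directory."""
    return int((Path(supply_dir) / "voltage_now").read_text().strip())


def safe_to_switch(voltage_uv, setpoint_uv=SETPOINT_UV):
    """Return True once the battery voltage is no higher than the new setpoint."""
    return voltage_uv <= setpoint_uv


if __name__ == "__main__":
    # Hypothetical path: the battery's directory name differs between devices.
    supply = Path("/sys/class/power_supply/battery")
    if (supply / "voltage_now").exists():
        uv = read_voltage_uv(supply)
        print(f"{uv / 1e6:.2f} V, safe to switch: {safe_to_switch(uv)}")
    else:
        print(f"No voltage_now under {supply}; adjust the path for your device")
```

Listing `/sys/class/power_supply/` on the phone should reveal the right directory name to substitute in.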

It’s a neat mod that both increases safety and keeps the battery on hand to let the system ride through minor power outages. If you’re new to the world of repurposing old smartphones, fear not. [Bryan] also has a tutorial on getting started with PostmarketOS for the unfamiliar. If you’re working on your own projects in this space, we’d love to hear about them, so get on over to the tipsline!