[Carl] just found yet another use for the RTL-SDR. He’s been decoding Inmarsat STD-C EGC messages with it. Inmarsat is a British satellite telecommunications company. They provide communications all over the world to places that do not have a reliable terrestrial communications network. STD-C is a text message communications channel used mostly by maritime operators. This channel carries Enhanced Group Call (EGC) messages, which include information such as search and rescue notices, coast guard bulletins, weather reports, and more.

Not much equipment is required for this, just the RTL-SDR dongle, an antenna, a computer, and the cables to hook them all up together. Once all of the gear was collected, [Carl] used an Android app called Satellite AR to locate his nearest Inmarsat satellite. Since these satellites are geostationary, he won’t have to move his antenna once it’s pointed in the right direction.
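If you would rather work out the pointing direction yourself instead of using an app, the geometry for a geostationary satellite is simple enough to sketch. The snippet below is a minimal illustration, not what [Carl] or Satellite AR actually does: it assumes a spherical Earth, and the function name `look_angles` and the example coordinates are made up for this sketch. You would need to look up the real orbital longitude of your nearest Inmarsat satellite.

```python
import math

EARTH_RADIUS_KM = 6378.137     # equatorial radius; spherical Earth is an assumption
GEO_ORBIT_RADIUS_KM = 42164.0  # geostationary orbit radius from Earth's center


def look_angles(obs_lat_deg, obs_lon_deg, sat_lon_deg):
    """Return (azimuth, elevation) in degrees toward a geostationary satellite.

    Azimuth is measured clockwise from true north (not magnetic north).
    A negative elevation means the satellite is below the horizon.
    """
    lat = math.radians(obs_lat_deg)
    lon = math.radians(obs_lon_deg)
    sat = math.radians(sat_lon_deg)

    # Local "up" unit vector at the observer, in Earth-centered coordinates.
    up = (math.cos(lat) * math.cos(lon),
          math.cos(lat) * math.sin(lon),
          math.sin(lat))
    # Local east and north unit vectors at the observer.
    east = (-math.sin(lon), math.cos(lon), 0.0)
    north = (-math.sin(lat) * math.cos(lon),
             -math.sin(lat) * math.sin(lon),
             math.cos(lat))

    # Observer and satellite positions; the satellite sits over the equator.
    obs_pos = tuple(EARTH_RADIUS_KM * c for c in up)
    sat_pos = (GEO_ORBIT_RADIUS_KM * math.cos(sat),
               GEO_ORBIT_RADIUS_KM * math.sin(sat),
               0.0)
    to_sat = tuple(s - o for s, o in zip(sat_pos, obs_pos))

    def dot(a, b):
        return sum(x * y for x, y in zip(a, b))

    # Project the observer-to-satellite vector onto the local east/north/up axes.
    e, n, u = dot(to_sat, east), dot(to_sat, north), dot(to_sat, up)
    azimuth = math.degrees(math.atan2(e, n)) % 360.0
    elevation = math.degrees(math.atan2(u, math.hypot(e, n)))
    return azimuth, elevation


if __name__ == "__main__":
    # Hypothetical observer at 45 N, 10 E and a hypothetical satellite at 0 E.
    az, el = look_angles(45.0, 10.0, 0.0)
    print(f"azimuth {az:.1f} deg, elevation {el:.1f} deg")
```

Because the satellite does not move relative to the ground, these two numbers only need to be computed once per location.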

As far as antennas go, [Carl] recommends a dish or helix antenna. If you don’t want to fork over the money for something that fancy, he also explains how you can modify a $10 GPS antenna to work for this purpose. He admits that it’s not the best antenna for this, but it will get the job done. A typical GPS antenna will be tuned for 1575 MHz and will contain a band pass filter that blocks signals more than 1-2 MHz away from that frequency. The Inmarsat signal falls outside that narrow passband, so the filter has to go.
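To see why the stock filter gets in the way, here is a rough back-of-the-envelope check. The 1575 MHz center and the 2 MHz half-width come from the description above; the 1540 MHz target is only an assumed, illustrative L-band value, so substitute the actual channel frequency for your satellite. The helper name `passes_filter` is made up for this sketch.

```python
GPS_CENTER_MHZ = 1575.0       # GPS antenna tuning, from the article
FILTER_HALF_WIDTH_MHZ = 2.0   # upper end of the "1-2 MHz" figure in the article
TARGET_MHZ = 1540.0           # ASSUMPTION: illustrative Inmarsat L-band frequency


def passes_filter(freq_mhz,
                  center_mhz=GPS_CENTER_MHZ,
                  half_width_mhz=FILTER_HALF_WIDTH_MHZ):
    """Return True if freq_mhz lies inside an idealized brick-wall passband."""
    return abs(freq_mhz - center_mhz) <= half_width_mhz


offset_mhz = abs(TARGET_MHZ - GPS_CENTER_MHZ)

if __name__ == "__main__":
    print(f"target is {offset_mhz:.1f} MHz from the GPS center frequency")
    print("passes stock filter:", passes_filter(TARGET_MHZ))
```

A real filter rolls off gradually rather than cutting off sharply, so treat this as an order-of-magnitude argument only.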

To get at the filter, the plastic case must first come off. Then a metal reflector needs to be desoldered from the bottom of the antenna. The actual antenna circuit is hiding under the reflector. The filter is typically the largest component on the board. Once the filter has been desoldered, its IN and OUT pads are bridged together. The whole thing can then be put back together for use with this project.

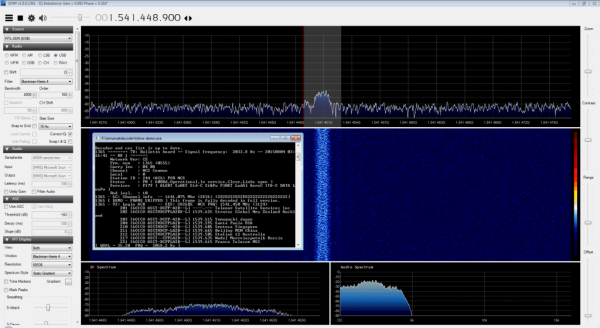

Once everything was hooked up and the antenna was pointed in the right place, the signal from the dongle was fed into the SDR# tuner software. After tuning to the correct frequency and setting all of the audio parameters, the audio output from SDR# was decoded with another program called tdma-demo.exe. If everything is tuned just right, the software will be able to decode the audio signal and it will start to display messages. [Carl] posted some interesting examples including a couple of pirate warnings.

If you can’t get enough RTL-SDR hacks, be sure to check out some of the others we’ve featured in the past. And don’t forget to send in links to your own hacking!